Human hearing does not track amplitude directly. Perceived volume and measured amplitude are separate things, since the human ear has certain subjective quirks when it comes to perception.

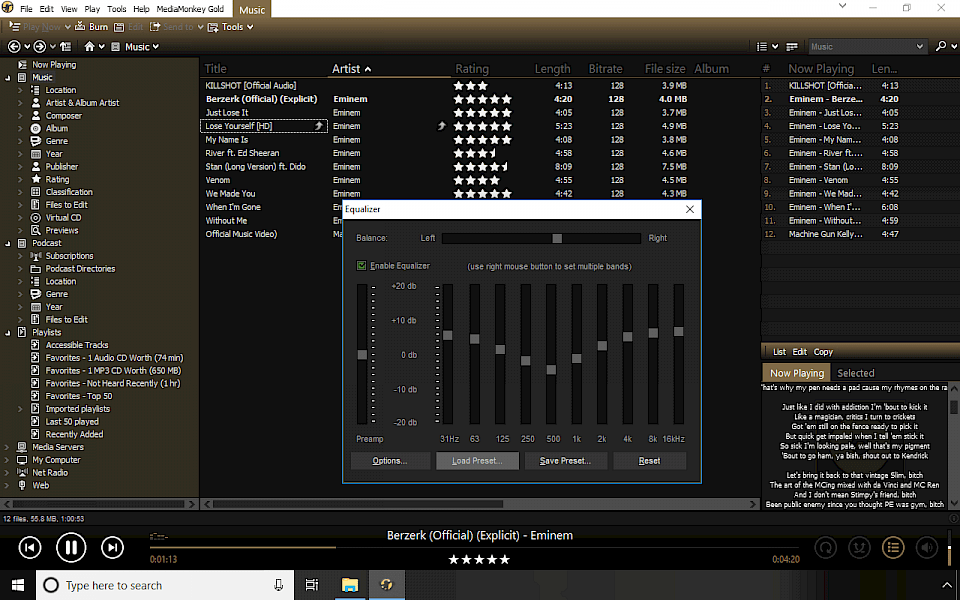

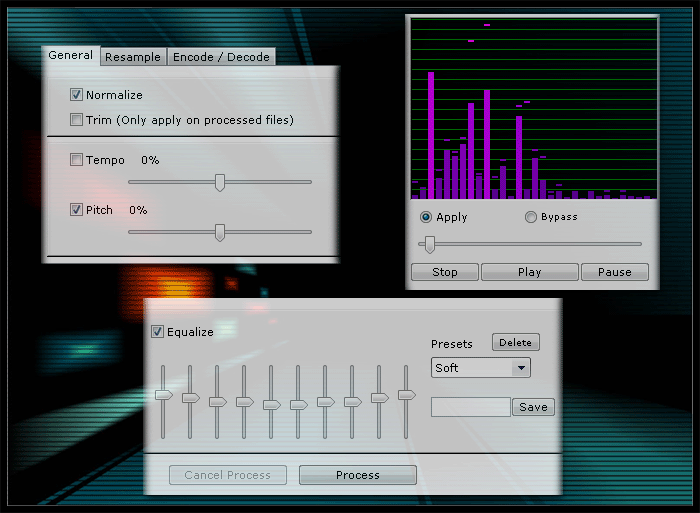

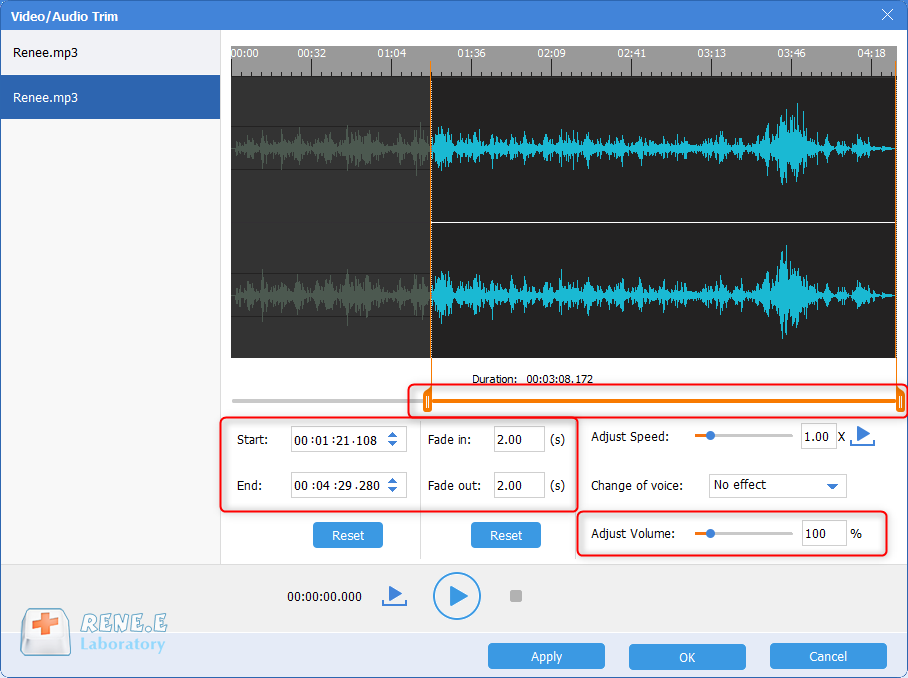

There are different types of audio normalization for various audio recording use cases. Most of the time, normalizing audio boils down to peak normalization and loudness normalization.

Peak normalization is a linear process where the same amount of gain is applied across an audio signal to bring the track's peak amplitude to a target level. The process finds the highest PCM (pulse-code modulation) value in the audio file and scales the signal against the upper limit of a digital audio system, which usually means normalizing the maximum peak to 0 dBFS. The dynamic range remains the same, and the new audio file sounds more or less the same, apart from being louder or quieter. Peak normalization is strictly based on peak levels, rather than the perceived volume of the track.

The loudness normalization process is more complex since it takes into account the human perception of hearing.

Whichever method you use, the entire recording atmosphere and sound should be fairly consistent throughout, so you might have to go back and adjust gain within the context of all songs.
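As a rough illustration of peak normalization as described above, the sketch below finds the largest absolute sample value and applies one constant gain so that this peak lands on a target level. It assumes the audio is already decoded into floating-point samples between -1.0 and 1.0, where 1.0 represents full scale (0 dBFS). The function name `peak_normalize` and the `target_dbfs` parameter are hypothetical and not taken from any particular audio tool.

```python
import math


def peak_normalize(samples, target_dbfs=0.0):
    """Scale samples so the largest absolute value sits at target_dbfs.

    Assumes float samples in the range -1.0..1.0 (1.0 == 0 dBFS).
    """
    # Find the highest absolute sample value in the clip.
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # pure silence: nothing to scale

    # Convert the target from decibels to a linear amplitude.
    target_linear = 10 ** (target_dbfs / 20)

    # One constant gain for every sample, so the process stays linear
    # and the ratios between samples (the dynamic range) are unchanged.
    gain = target_linear / peak
    return [s * gain for s in samples]


quiet = [0.0, 0.1, -0.25, 0.05]
loud = peak_normalize(quiet)           # peak raised to full scale
safer = peak_normalize(quiet, -1.0)    # peak raised to -1 dBFS instead
```

Because every sample is multiplied by the same factor, the relationship between loud and quiet passages is untouched. Only the overall level changes.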

So why is it important to normalize your audio files? Audio normalization is essential for any digital recording, but there isn't a one-size-fits-all approach. Every single audio clip should be approached differently, because the process can have side effects. For instance, a peak might become squashed or distorted through normalization. Here are a few scenarios in which loudness normalization is a must.

Streaming services set a standard normalization level across the songs hosted in their music libraries. This way, listeners won't have to drastically turn the volume up or down when switching from one song to another. Every platform has a different target level, so it's not uncommon to have different masters for various streaming platforms. Each engineer has their own philosophy when it comes to determining the target level for each master, but these standardizations should be taken into account.

Audio normalization can be used to achieve the maximum gain of each audio file. This can be incredibly useful while importing tracks into audio editing software or to make an individual audio file louder.

Create A Consistent Level Between Several Audio Files

You can also normalize audio to set multiple audio files at the same relative level. This is particularly essential in processes like gain staging, where you set audio levels in preparation for the next stage of processing. You might also normalize and edit audio files upon completing a music project like an album or EP.
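The distortion risk mentioned above comes down to headroom: if the gain you apply is larger than the distance between the clip's peak and full scale, the peaks will exceed the digital ceiling. The following is a minimal sketch of that check, again assuming float samples between -1.0 and 1.0. The helper names `headroom_db` and `would_clip` are made up for illustration.

```python
import math


def headroom_db(samples):
    """Return how many dB of gain fit before the peak reaches 0 dBFS."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return float("inf")  # silence can take any amount of gain
    return -20 * math.log10(peak)


def would_clip(samples, gain_db):
    """True if applying gain_db would push the peak past full scale."""
    return gain_db > headroom_db(samples)


clip = [0.1, -0.5, 0.3]
room = headroom_db(clip)           # roughly 6 dB, since the peak is 0.5
safe = would_clip(clip, 6.0)       # stays under full scale
too_hot = would_clip(clip, 7.0)    # exceeds full scale
```

A clip that fails this check needs either a lower target or some form of limiting before the gain is applied, which is one reason each clip deserves its own treatment.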

Over the past three decades, the reality of how we consume music has completely shifted. With the advent of digital streaming services, or DSPs, like Spotify and Apple Music, audio normalization has become an essential part of the process. But what does it mean to normalize audio? And how can you normalize your own digital audio files? Below, we'll share exactly how to normalize audio and why it's a key step in modern music-making.

When you normalize audio, you're applying a certain amount of gain to a digital audio file. This brings your audio file to a target perceived amplitude or volume level while preserving the dynamic range of the tracks. Audio normalization is used to achieve the maximum volume from a selected audio clip. It can also be used to create more consistency across multiple audio clips. For instance, you might have multiple tracks within an album or EP. It's worth noting that songs with a larger dynamic range can be more challenging to normalize effectively.
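To make the idea of "applying gain to reach a target level" concrete, here is a simplified sketch that normalizes a clip to a target average level. It uses plain RMS level as a stand-in for perceived loudness, which is an assumption made for brevity: real loudness normalization uses a perceptually weighted measurement, and this sketch does not implement one. The function names and the -14.0 default are hypothetical placeholders, not any platform's specification.

```python
import math


def rms_dbfs(samples):
    """Average (RMS) level of float samples in dB relative to full scale."""
    if not samples:
        return float("-inf")
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0.0:
        return float("-inf")  # silence has no measurable level
    return 20 * math.log10(math.sqrt(mean_square))


def normalize_to_rms(samples, target_dbfs=-14.0):
    """Apply one constant gain so the clip's RMS level matches target_dbfs.

    RMS is only a crude proxy for perceived loudness (assumption).
    """
    current = rms_dbfs(samples)
    if current == float("-inf"):
        return list(samples)
    # The difference in dB between target and current level is the gain.
    gain = 10 ** ((target_dbfs - current) / 20)
    return [s * gain for s in samples]


track_a = [0.5, -0.5, 0.25, -0.25]
track_b = [0.05, -0.02, 0.04, -0.01]
matched = [normalize_to_rms(t, -20.0) for t in (track_a, track_b)]
```

After this step both clips report the same average level, even though they started far apart. Since a single gain value is used per clip, each clip's internal dynamic range is preserved.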
